- ScholarSphere Newsletter

- Posts

- ScholarSphere Newsletter #17

ScholarSphere Newsletter #17

Where AI meets Academia

Welcome to 17th edition of ScholarSphere

“If we had a characteristica universalis, we should be able to reason in metaphysics and morals in much the same way as in geometry and analysis.” Leibniz

Welcome to our AI Newsletter—your ultimate guide to the rapidly changing world of AI in academia. If you haven't joined us yet, now's your chance! Click that button, subscribe with your email, and get ready for an exciting journey through all things AI in the academic realm!

In today's search of AI, we'll see...

Deep Dive into AI: Expand Your Knowledge

Towards Model-Based Reinforcement Learning on Real Robots |

AI & Engineering |

By Georg Martius

Summarized by NotGPT Youtube Video Summarizer

Summary

Georg Martius discusses advancements in model-based reinforcement learning for real robots, focusing on adaptive strategies and safety in robotics.

Highlights

🤖 Martius leads a research group on autonomous learning at the Max Planck Institute.

📈 Reinforcement learning has achieved successes in robotics but often relies on inefficient simulations.

🔄 Model-based reinforcement learning may provide faster learning and adaptation for robots.

🎯 The cross-entropy method helps optimize action sequences for robotic tasks.

🛡️ Understanding uncertainties is crucial for safe robot operations in real environments.

🧩 Combining planning with learning can enhance robotic adaptability and performance.

🚀 Future applications aim to implement these methods in real-world robotic systems.

Key Insights

🤔 Reinforcement Learning Challenges: Current reinforcement learning methods often require extensive data and simulations, limiting their effectiveness in real-world applications. This highlights the need for more efficient approaches to robotic learning.

⚙️ Model-Based Learning Advantages: Utilizing model-based reinforcement learning can significantly reduce training time and improve adaptability, allowing robots to better handle dynamic environments and tasks.

📊 Cross-Entropy Method: This sample-based optimization technique can enhance robotic performance by efficiently exploring action sequences, resulting in quicker and more effective learning processes.

⚖️ Importance of Uncertainty: Differentiating between aleatoric and epistemic uncertainties is vital for developing robust robots that can safely navigate unpredictable environments and make informed decisions.

🔄 Adaptive Strategies: Implementing adaptive policy extraction methods allows robots to refine their decision-making processes, improving performance in complex tasks while minimizing compounding errors.

🏗️ Safety-Aware Planning: By incorporating safety constraints into planning algorithms, robots can better avoid hazardous situations, enhancing their usability in sensitive real-world applications.

🌍 Future Directions: Continuing to develop these advanced reinforcement learning models can lead to significant breakthroughs in robot autonomy, enabling them to assist in diverse tasks, from assembly to healthcare.

You can also find extra teaching articles in our LinkedIn Page.

Mastering AI: Prompt Perfection

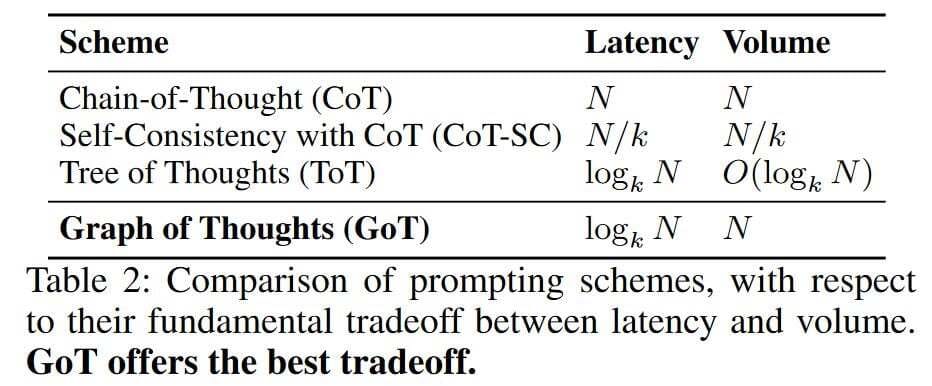

What is the Difference Between Tree-of-Thought (ToT) Prompting and Graph-of-Thought (GoT) Prompting? Which is Better and Why?

By aman.ai

Disadvantages of CoT:

CoT prompting fails to solve problems that benefit from planning, strategic lookahead, backtracking, and exploration of numerous solutions in parallel.

Structural Approach:

Tree-of-Thought (ToT) prompting structures the reasoning process as a tree where each node represents a “thought” or a simpler sub-problem derived from a more complex problem. Put simply, ToT prompting breaks a complex problem into a series of simpler problems (or “thoughts”). The solution process involves branching out into these simpler problems, allowing for strategic planning, lookahead, and backtracking. The LLM generates many thoughts and continually evaluates its progress toward a final solution via natural language (i.e., via prompting). By leveraging the model’s self-evaluation of progress towards a solution, we can power the exploration process with widely-used search algorithms (e.g., breadth-first search or depth-first search), allowing for lookahead/backtracking when solving a problem.

Graph-of-Thought (GoT) prompting, on the other hand, generalizes research on ToT prompting to graph-based strategies for reasoning. GoT thus uses a graph structure instead of a tree. This means that the paths of thought are not strictly linear or hierarchical. Thoughts can be revisited, reused, or even form recursive loops. This approach recognizes that the reasoning process may require revisiting earlier stages or combining thoughts in non-linear ways.

Flexibility and Complexity:

ToT is somewhat linear in its progression, even though it allows for branching. This makes it suitable for problems where a structured breakdown into sub-problems can lead logically from one step to another until a solution is reached.

GoT provides greater flexibility as it can accommodate more complex relationships between thoughts, allowing for more dynamic and interconnected reasoning. This is particularly useful in scenarios where the problem-solving process is not straightforward and may benefit from revisiting and re-evaluating previous thoughts. GoT thus makes no assumption that the path of thoughts used to generate a solution is linear. We can re-use thoughts or even recurse through a sequence of several thoughts when deriving a solution. - Which is better and why? Choosing between ToT and GoT prompting depends on the nature of the problem at hand:

For problems that are well-suited to linear or hierarchical breakdowns, where each sub-problem distinctly leads to the next, ToT prompting might be more effective and efficient. It simplifies the problem-solving process by maintaining a clear structure and path.

For more complex problems where the solution might require revisiting earlier ideas or combining insights in a non-linear fashion, GoT prompting could be superior. It allows for a more robust exploration of potential solutions by leveraging the flexibility and interconnectedness of thoughts in a graph.

Note that ultimately, neither method is inherently “better” as their effectiveness is context-dependent. The choice between them should be guided by the specific requirements and nature of the problem being addressed. Additionally, the criticism of both methods regarding their practicality due to the potential for a high number of inference steps indicates that there are still challenges to overcome in deploying these techniques efficiently.

On a related note, these tree/graph-based prompting techniques have been criticized for their lack of practicality. Solving a reasoning problem with ToT/GoT prompting could potentially require a massive number of inference steps from the LLM!

Full getting access to our Prompt Inventory check here

Don’t forget to visit our LinkedIn Page

Cutting-Edge AI Insights for Academia

A new Cengage Group report shows recent college graduates across the board have concerns about keeping pace with generative artificial intelligence. Cengage Group

Credit to AnalyticsInside

Join ScholarSphere Now!

Spotlight on AI Tools for Academic Excellence

NaturalReader: AI Text to Speech Have any type of text read aloud with the most natural AI voices.

QuizGecko: An AI-powered platform that generates quizzes from existing content

SEO.ai: The top AI Writer for SEO, generating high-quality SEO keyword research and copywriting.

SEO.ai Homepage

Numerous.ai: An AI plugin that brings ChatGPT to spreadsheets, allowing users to extract data and use ChatGPT.

For finding more selected ai apps, please subscribe to ScholarSphere Newsletter Series

Reply