ScholarSphere Newsletter #18

Where AI meets Academia

Welcome to the 18th edition of ScholarSphere!

“A sign of intelligence is an awareness of one’s own ignorance.” Niccolò Machiavelli (3 May 1469 – 21 June 1527)

Welcome to our AI newsletter, your ultimate guide to the rapidly changing world of AI in academia. If you haven't joined us yet, now's your chance: subscribe with your email and get ready for an exciting journey through all things AI in the academic realm!

In today's edition, we'll explore...

Deep Dive into AI: Expand Your Knowledge

Meta AI has introduced Llama 3.1, headlined by a 405-billion-parameter open-source language model designed to push the boundaries of natural language processing (NLP). Llama 3.1 is a significant upgrade over its predecessors, with stronger capabilities for understanding and generating human language. The model is a decoder-only Transformer trained extensively on diverse datasets, which lets it handle a wide range of linguistic tasks. The article covers the model's architecture, its training process, and its potential applications across industries. Because the model is open source, it can be widely adopted and customized, fostering innovation in the AI community. The article emphasizes the importance of such advances for improving human-computer interaction and automating complex tasks.

One of Llama 3.1's standout features is its ability to handle complex reasoning and comprehension tasks, which the article attributes to its large parameter count and sophisticated training methodology. For example, the model can summarize lengthy documents, give detailed answers to user queries, and generate creative content such as poetry and stories. Training on a vast corpus of text from diverse sources helps it learn the subtleties and nuances of human language. According to the article, the model has been benchmarked against existing state-of-the-art models and achieved superior results on various NLP tasks. Its robustness and reliability also make it a candidate for demanding applications such as customer service automation, content creation, and advanced data analysis.

The article also highlights several practical applications of Llama 3.1 across sectors. In healthcare, for instance, the model could support the diagnosis of medical conditions by analyzing patient data and research articles. In the legal field, it can help draft documents and summarize cases, significantly reducing the time and effort professionals spend on these tasks. In education, Llama 3.1 can power intelligent tutoring systems that personalize learning for each student. Businesses can also use the model for market analysis, drawing insights from large datasets to improve decision-making. Its open-source nature encourages developers and researchers to explore new uses, driving the evolution of AI technology.

During the Q&A session, the interviewer posed several insightful questions about the development and future of Llama 3.1. One focused on the ethical considerations of deploying such a powerful model; the speaker emphasized responsible use and transparency. Another addressed potential bias, and the speaker explained the measures taken to mitigate bias during training. Asked about scalability, the speaker highlighted the model's flexible architecture, which lets it be scaled and adapted for different applications. Finally, the discussion turned to future directions, with the speaker optimistic about continued improvements and additional capabilities. Together, these answers give a fuller picture of the model's impact and its potential to transform NLP.

Read the full article on DataCamp here.

You can also find more teaching articles on our LinkedIn page.

Mastering AI: Prompt Perfection

Reducing Latency with Skeleton of Thought (SoT) Prompting

By PromptHub

Summarized by Perplexity.ai

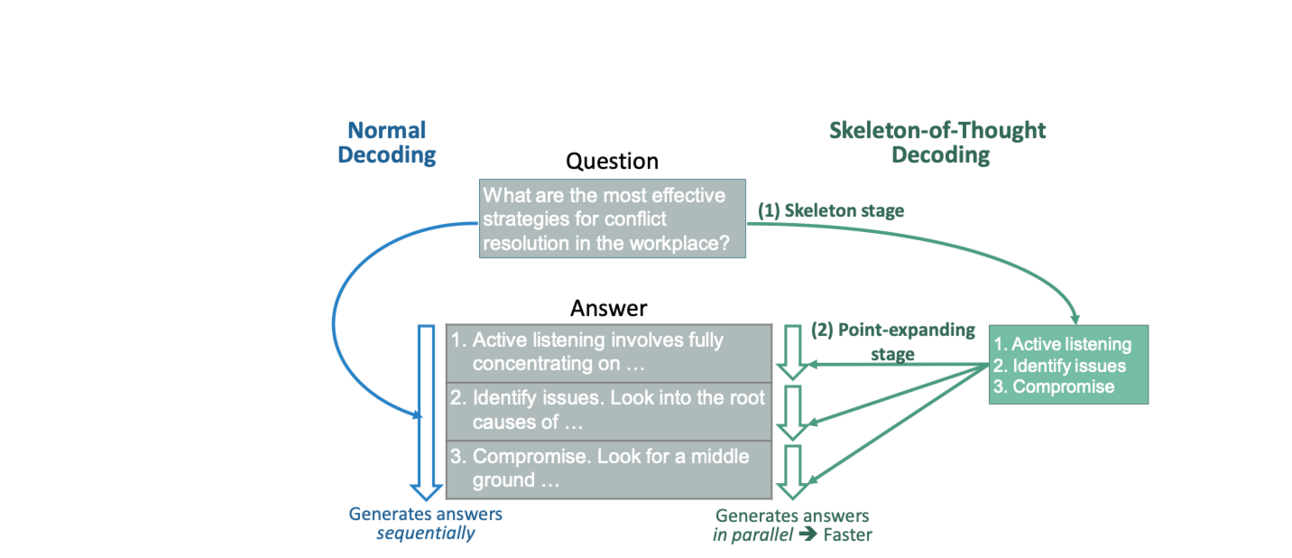

The Skeleton-of-Thought (SoT) prompting technique reduces the latency of large language model (LLM) outputs by splitting generation into two stages: first creating a structured skeleton, then expanding each point of that skeleton in parallel. It is particularly useful for tasks that call for fast, well-structured responses. SoT begins with a "skeleton prompt," which instructs the model to produce only an outline, a short list of bullet points, for its intended answer. This first step makes the model organize its answer before writing the details. Once the skeleton exists, SoT moves to the "point-expanding stage," where every bullet point is elaborated simultaneously rather than one after another. Because the points are generated in parallel, the total time needed to produce a full response can drop substantially.

Credit to PromptHub.us
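The two stages can be sketched in Python. This is a minimal sketch under stated assumptions, not the paper's implementation: `llm` is a hypothetical callable that sends a prompt to some model and returns text, the prompt templates are illustrative rather than the paper's exact wording, and the parallel stage uses a thread pool to issue concurrent API-style calls (for a locally hosted model, batched decoding would play the same role). `toy_llm` is a hypothetical stand-in so the example runs without any model.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Hypothetical model interface: any function mapping a prompt string to a completion.
LLM = Callable[[str], str]

# Illustrative prompt templates (assumptions, not the paper's exact wording).
SKELETON_PROMPT = (
    "Give only a numbered skeleton (3-5 short points) for answering:\n{question}"
)
EXPAND_PROMPT = (
    "Question: {question}\nSkeleton:\n{skeleton}\n"
    "Expand only point {index} in one or two sentences: {point}"
)


def parse_skeleton(text: str) -> List[str]:
    """Extract point texts from lines such as '1. Light absorption'."""
    points = []
    for line in text.splitlines():
        head, sep, tail = line.strip().partition(".")
        if sep and head.isdigit() and tail.strip():
            points.append(tail.strip())
    return points


def skeleton_of_thought(question: str, llm: LLM, max_workers: int = 8) -> str:
    # Stage 1: a single short call that produces the outline.
    skeleton = llm(SKELETON_PROMPT.format(question=question))
    points = parse_skeleton(skeleton)
    # Stage 2: one independent call per point; each prompt sees the skeleton,
    # but not the other points' expansions.
    prompts = [
        EXPAND_PROMPT.format(question=question, skeleton=skeleton, index=i, point=p)
        for i, p in enumerate(points, start=1)
    ]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        expansions = list(pool.map(llm, prompts))  # results come back in input order
    # Reassemble the answer in skeleton order.
    return "\n".join(
        f"{i}. {p} {e}" for i, (p, e) in enumerate(zip(points, expansions), start=1)
    )


def toy_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; returns canned text."""
    if prompt.startswith("Give only a numbered skeleton"):
        return "1. Collect evidence\n2. Compare options\n3. Decide"
    point = prompt.rsplit(": ", 1)[-1]  # the point text ends each expand prompt
    return f"[details on '{point}']"


print(skeleton_of_thought("How should I choose a research topic?", toy_llm))
```

`pool.map` returns results in the order the prompts were submitted, even if the calls finish out of order, so the final answer can be stitched back together in skeleton order without extra bookkeeping.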

The effectiveness of SoT was tested through various experiments, which demonstrated its ability to reduce end-to-end latency across different models and question types. The researchers used the Vicuna-80 dataset, which includes 80 questions spanning nine categories, and tested 11 models, including both open-source and API-based models. The results showed that SoT could improve the diversity and relevance of outputs while maintaining a structured approach. However, it was noted that SoT might negatively impact the coherence and immersion of the responses because the segmented approach can lead to disjointed answers. Despite these drawbacks, the technique shows promise in scenarios where speed and structured output are prioritized.

Consider a practical scenario: describing the process of photosynthesis. The skeleton prompt might produce key points such as "Photosynthesis occurs in plants," "Involves converting light energy to chemical energy," and "Creates glucose and oxygen." In the point-expanding stage, each point is then elaborated in parallel, covering light absorption, the role of chlorophyll, the Calvin cycle, and oxygen release. Because several parts of the answer are written at the same time, the model can cover the topic thoroughly while finishing sooner than it would by writing one long answer sequentially.
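To make the latency argument concrete, the photosynthesis skeleton can be run through a toy timing experiment. This is an illustrative sketch, not a measurement of any real model: `expand_point` is a hypothetical stand-in that simply sleeps for an assumed 0.2 s to mimic one model call, and the parallel version uses a thread pool the way concurrent API requests would be issued.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Skeleton points taken from the photosynthesis example.
SKELETON = [
    "Photosynthesis occurs in plants",
    "Involves converting light energy to chemical energy",
    "Creates glucose and oxygen",
]

SIMULATED_DELAY_S = 0.2  # hypothetical per-call model latency


def expand_point(point: str) -> str:
    """Hypothetical stand-in for a model call that elaborates one point."""
    time.sleep(SIMULATED_DELAY_S)  # pretend the model takes this long to respond
    return f"{point}: (elaboration)"


def expand_sequentially(points):
    # One call after another: total time is roughly the sum of the delays.
    return [expand_point(p) for p in points]


def expand_in_parallel(points):
    # All calls in flight at once: total time is roughly the longest delay.
    with ThreadPoolExecutor(max_workers=len(points)) as pool:
        return list(pool.map(expand_point, points))


def timed(fn, points):
    start = time.perf_counter()
    result = fn(points)
    return result, time.perf_counter() - start


seq_result, seq_time = timed(expand_sequentially, SKELETON)
par_result, par_time = timed(expand_in_parallel, SKELETON)
assert seq_result == par_result  # same content, same order
print(f"sequential: {seq_time:.2f}s, parallel: {par_time:.2f}s")
```

With three simulated calls, sequential expansion takes roughly the sum of the delays, while parallel expansion takes roughly one delay. With a real model, the end-to-end gain also depends on the skeleton call, which must finish first, and on how well the serving system handles concurrent requests.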

While SoT offers a structured approach to problem-solving, it is not suitable for every scenario. Questions that require step-by-step reasoning, such as math or coding problems, fit poorly: each point is expanded without seeing the details of the others, so later steps cannot build on earlier ones. Moving from sequential to parallel generation may also require changes to system design or additional compute resources, and the segmented nature of SoT can make the final output less coherent. Nevertheless, the potential gains in latency and structure make it a valuable technique in prompt engineering, and combining SoT with other methods could help offset its drawbacks while keeping outputs both fast and high quality.

Click here to read the full article.

For full access to our Prompt Inventory, check here.

Don’t forget to visit our LinkedIn Page

Cutting-Edge AI Insights for Academia

Syracuse University Maxwell School of Citizenship & Public Affairs:

Sultana Comments on Academic Publishers Partnering With AI Companies in Chronicle of Higher Ed Piece

NextGov FCW (By Alexandra Kelley, Staff Correspondent):

Education Dept. offers guidance on developing AI for the classroom

UNC School of Medicine:

Researchers Explore Generative AI Benefits and Shortfalls in Medical Education

Oxford dons have been lobbying against digital assessment over fears that students could cheat using chatbots like ChatGPT

Paper of the week: Skeleton-of-Thought: Prompting LLMs for Efficient Parallel Generation by Xuefei Ning, et al. (2023)

Spotlight on AI Tools for Academic Excellence

Omniscience: 10x your research and writing workflow with Omniscience, the creative AI that writes books and papers. Omniscience retrieves and generates writing using context from your uploaded documents, books, websites, PDFs, and more.

Quino AI: Summarize your PDFs, presentations, and textbooks in seconds and accelerate your learning! Get instant insights and ask questions about your documents with AI. Upload a .pdf, .docx, .doc, or .txt file to get your summary; built for students, professionals, and researchers.

Weet: AI-powered video recording and editing for interactive training videos. Record your screen and webcam, or upload your existing videos, and quickly deliver professional-looking interactive videos.

PortfolioPilot: AI financial advisor for better investing decisions; 360° portfolio analysis and hyper-personalized recommendations guided by its Economic Insights Engine. Improve your investing strategy with unbiased advice.

CoinTrack.ai: Track all your cryptocurrency assets in one place; a simple and secure crypto portfolio tracker that manages your DeFi assets.

CryptoHopper: The world’s most customizable crypto trading bot

To discover more featured and selected AI tools and apps, subscribe to the ScholarSphere Newsletter series.