- ScholarSphere Newsletter

- Posts

- ScholarSphere Newsletter #19

ScholarSphere Newsletter #19

Where AI meets Academia

Welcome to 19th edition of ScholarSphere

“It takes something more than intelligence to act intelligently.”

Fyodor Dostoevsky (1821-1881)

Welcome to our AI Newsletter—your ultimate guide to the rapidly changing world of AI in academia. If you haven't joined us yet, now's your chance! Click that button, subscribe with your email, and get ready for an exciting journey through all things AI in the academic realm!

In today's search of AI, we'll see...

Deep Dive into AI: Expand Your Knowledge

What is a Foundation Model?

By AdaLovelaceInstitute

Summarized by Perplexity.com

Foundation model

AI model trained on broad data for wide use cases

Definition: An AI model trained on extensive datasets enabling application across diverse domains.

Significance: Has transformed AI by powering chatbots, generative AI, and other technologies.

Origin: Term popularized by the Stanford Institute for Human-Centered Artificial Intelligence's Center for Research on Foundation Models (CRFM).

Resource Intensity: Development is highly resource-intensive, with top models costing hundreds of millions of dollars.

Early Examples: Include Google's BERT and OpenAI's GPT-n series for language, as well as DALL-E for images and MusicGen for music.

Fields of Application: Used in various modalities beyond text, including robotics, astronomy, radiology, genomics, music, coding, and mathematics.

Foundation models are large-scale machine learning models designed to be versatile and adaptable, capable of performing a wide variety of tasks. These models are trained on extensive datasets, often using self-supervision at scale, allowing them to generalize across multiple applications. The term "foundation model" was popularized by researchers at the Stanford Institute for Human-Centered Artificial Intelligence in 2021. Examples of foundation models include OpenAI’s GPT-3.5 and GPT-4, which power applications like ChatGPT and Bing Chat. These models can handle various inputs, such as text, images, and audio, making them multimodal. They serve as the basis for many AI applications, including image generation tools like Midjourney and Adobe Photoshop’s generative fill tools. The development of foundation models requires significant computational resources and large datasets, often sourced from the internet.

Foundation models are built on deep neural network architectures and trained on diverse, unlabeled datasets, which makes them highly adaptable. Traditional AI models are usually designed for specific tasks and require curated datasets, but foundation models can be fine-tuned for various applications without needing new training data. This adaptability is achieved through transfer learning, where the model applies patterns learned from one task to another. For instance, GPT-4, a language model, can be adapted for tasks like text summarization, classification, and even code generation. The versatility of foundation models has led to their widespread use in natural language processing (NLP), computer vision, and other AI fields. They have revolutionized AI by enabling more generalized and scalable solutions.

One timeline describes the path from early AI research to ChatGPT. (Source: blog.bytebytego.com)

The applications of foundation models are vast and varied, spanning multiple domains. In natural language processing, models like BERT and GPT-4 have advanced text generation, classification, and summarization. In computer vision, models such as CLIP and Kosmos-2 bridge the gap between images and text, enhancing tasks like object detection and image classification. Foundation models are also used in code generation, with tools like GitHub Copilot and Google's Codey assisting programmers by understanding and generating code. Additionally, speech-to-text applications benefit from foundation models that can transcribe audio from multiple languages. These models have opened new frontiers in AI, making it easier for organizations to leverage pre-trained models for specific tasks without extensive machine learning expertise.

Despite their benefits, foundation models also present challenges and limitations. The scale of data and computational resources required for training these models is immense, often costing hundreds of millions of dollars. Moreover, the segmented nature of foundation models can sometimes lead to a lack of coherence in the final output. There are also concerns about the ethical implications of using large datasets scraped from the internet, which may include biased or sensitive information. To address these issues, researchers emphasize the importance of balanced datasets and robust training methods. The development of foundation models continues to evolve, with ongoing research aimed at improving their efficiency, scalability, and ethical use. Combining foundation models with other AI techniques could further enhance their capabilities, providing more comprehensive and reliable solutions.

Table: Examples of Foundation Model Applications

Application Domain | Example Models | Use Cases |

|---|---|---|

Natural Language Processing (NLP) | Text generation, classification, summarization | |

Computer Vision | Object detection, image classification | |

Code Generation | Code writing, debugging, structured programming | |

Speech-to-Text | Universal Speech Models | Transcribing audio from multiple languages |

Image: Foundation Model Workflow

Foundation Model Workflow

This image illustrates the workflow of foundation models, from data collection and training to fine-tuning and application deployment. It highlights the stages involved in developing and utilizing these versatile AI models.

You reading the full article in Ada Lovelace Institute Click Here.

You can also find extra teaching articles in our LinkedIn Page.

Mastering AI: Prompt Perfection

Chain-of-Verification Reduces Hallucination in Large Language Models

By Shehzaad Dhuliawala, et al. (2023)

Summarized by Perplexity.ai

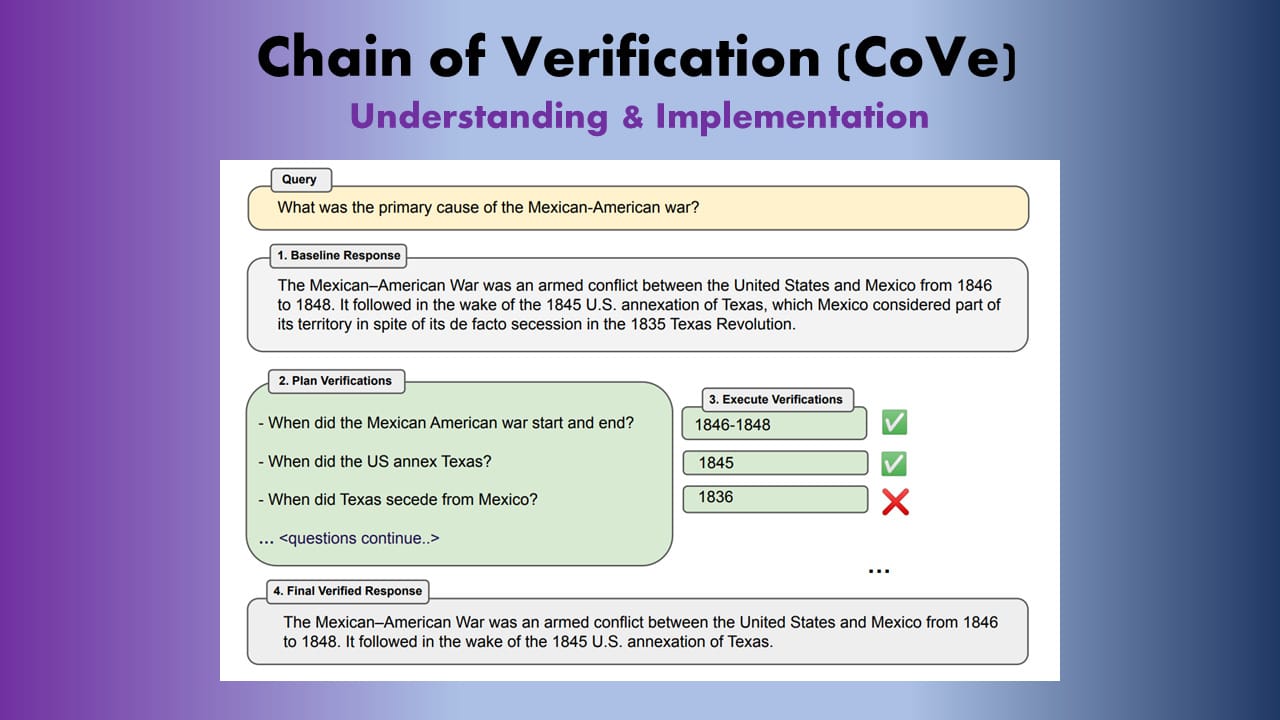

The Chain-of-Verification (CoVe) prompting technique addresses the issue of hallucination in large language models (LLMs), which refers to the generation of plausible but incorrect information. CoVe operates through a structured four-step process: it first generates a baseline response to a query, then formulates verification questions aimed at fact-checking the initial response, subsequently answers those questions independently, and finally generates a verified response based on the findings. This method allows the model to critically assess its own outputs, thereby enhancing the accuracy of the information provided. The authors conducted experiments across various tasks, including list-based questions and long-form text generation, and found that CoVe significantly reduces hallucinations compared to traditional approaches. The research highlights the potential of self-verification in improving the reliability of LLM outputs. By separating the verification steps, CoVe mitigates the risk of repeating errors from the baseline response.

Picture Credit to Sourajit Roy Chowdhury on Medium.com

CoVe's methodology is built upon the premise that LLMs can engage in self-analysis and reasoning, which is crucial for correcting inaccuracies. The first step involves generating a baseline response, which serves as the initial answer to the user's query. Following this, the model creates a set of verification questions tailored to the claims made in the baseline response. For example, if the baseline states a historical fact, the verification question might ask for specific dates or events related to that fact. This planning phase is critical as it sets the stage for the subsequent verification process. In the execution phase, the model independently answers each verification question, allowing it to assess the accuracy of its original claims without bias. Finally, the model synthesizes the results of the verification process into a refined response that incorporates any necessary corrections.

The effectiveness of CoVe was tested using various benchmarks, including list-based questions from the Wikidata API and the MultiSpanQA reading comprehension task. In these experiments, CoVe demonstrated a marked improvement in precision and accuracy over baseline models, particularly in generating correct lists of entities or answering complex questions. For instance, when tasked with identifying politicians born in a specific city, CoVe was able to produce a more accurate and comprehensive list than models that did not employ the verification process. The authors also explored different variants of the CoVe method, such as joint and factored approaches, to optimize performance further. The factored approach, which answers verification questions independently, proved particularly effective in reducing the likelihood of repeating hallucinations. Overall, CoVe presents a promising advancement in the quest for reliable outputs from LLMs.

Despite its advantages, CoVe is not without limitations. The computational cost associated with generating and verifying multiple responses can be significant, especially when dealing with complex queries requiring extensive verification. Additionally, while CoVe improves accuracy, it does not entirely eliminate the possibility of hallucinations, particularly if the underlying model is prone to errors. The authors emphasize the importance of ongoing research in refining these techniques and exploring their applicability across various domains. Future work may involve integrating external verification tools or datasets to enhance the accuracy of the verification process further. By combining CoVe with other strategies, researchers aim to create more robust LLMs capable of producing reliable and factually accurate information.

For reading the full text click here

Full getting access to our Prompt Inventory check here

Don’t forget to visit our LinkedIn Page

Cutting-Edge AI Insights for Academia

Paris 2024 serves as a general rehearsal for the AI agenda, setting the stage for future innovations.

Paper of the week: The moral psychology of artificial intelligence by Ali Ladak, et al. (2024)

Spotlight on AI Tools for Academic Excellence

Beacons.ai: A platform that provides creators with tools to build a comprehensive online presence, including customizable "Link in Bio" pages, full websites, and features like email marketing, online stores, and media kits. It offers a range of integrated apps for audience engagement, brand deal management, and business operations, all designed to be user-friendly and accessible without needing technical skills.

RefitResume: Create ATS-crushing resumes with the ease of a click with the power of LaTeX.

VideotoWord: Transcribe Video & Audio to Text Free Online; Easily convert video to text in your browser, add subtitles, and more

TapTalent: Your All-in-One AI-Powered Talent Ecosystem; Discover talent contacts effortlessly, run multi-channel outreach campaigns, post jobs across diverse platforms, screen candidates using powerful AI, and experience seamless end-to-end recruitment, all within one integrated solution.

For finding more featured and selected AI tools & apps, please subscribe to ScholarSphere Newsletter Series

Reply