ScholarSphere Newsletter #20

Where AI meets Academia

Welcome to the 20th edition of ScholarSphere

“The ability to observe without evaluating is the highest form of intelligence”

Jiddu Krishnamurti (1895-1986)

Welcome to our AI Newsletter—your ultimate guide to the rapidly changing world of AI in academia. If you haven't joined us yet, now's your chance! Click that button, subscribe with your email, and get ready for an exciting journey through all things AI in the academic realm!

In today's exploration of AI, we'll see...

Deep Dive into AI: Expand Your Knowledge

Multimodal AI Models: Understanding Their Complexity

By Addepto AI Data Consulting Co.

Summarized by ChatGPT (GPT-4o)

Picture Credit to Addepto.com

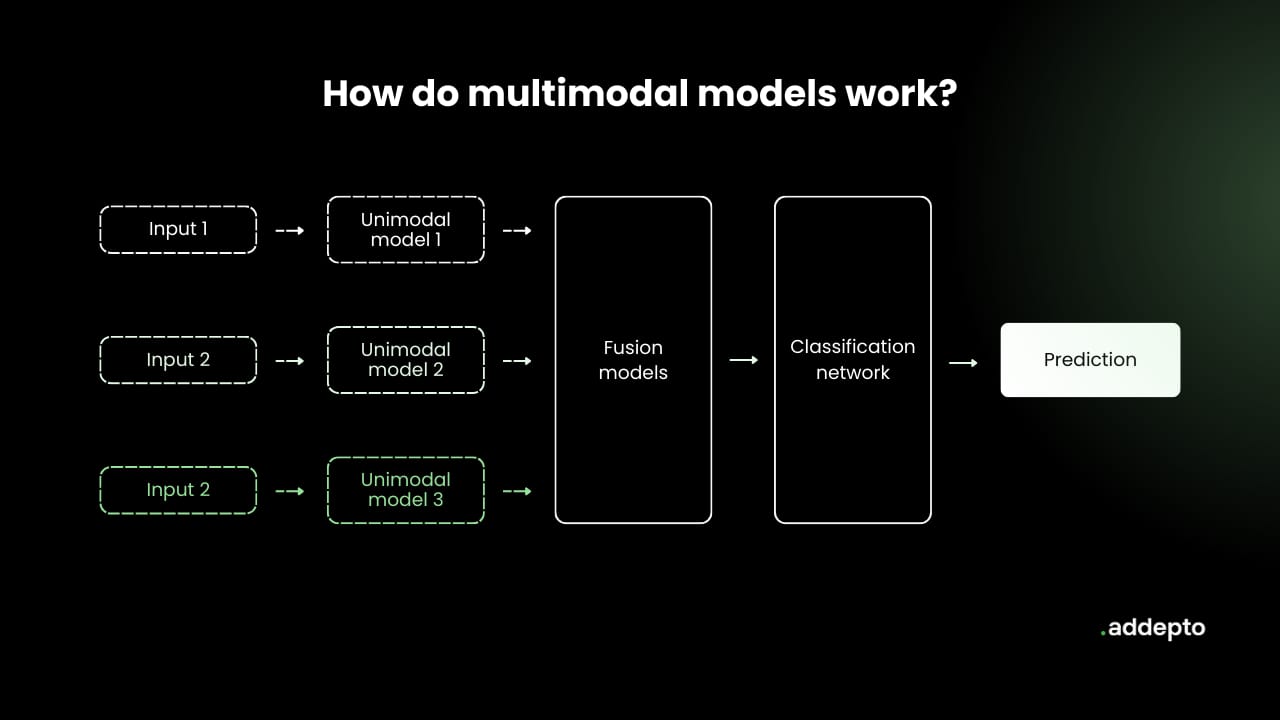

Multimodal AI refers to models that integrate and process multiple types of data—such as text, images, and audio—simultaneously to generate more comprehensive and accurate outputs. These models have various components that work together to process and fuse data from different modalities. For instance, one key element is the Fusion Module, which combines features from each modality into a unified representation, enhancing the model's ability to capture complex relationships between data types. Fusion techniques can be divided into early fusion, where data is merged at the feature level, and late fusion, where data is combined at the decision level.
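To make the difference between early and late fusion concrete, here is a minimal sketch in plain Python. It is not taken from the Addepto article: the feature values, the weight matrices, and the function names (`early_fusion`, `late_fusion`) are made-up assumptions, and a real system would learn its weights from data rather than hard-code them.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def linear(features, weights):
    """One score per class: dot product of the features with each weight row."""
    return [sum(f * w for f, w in zip(features, row)) for row in weights]

def early_fusion(text_feat, image_feat, joint_weights):
    """Feature-level fusion: concatenate the features, then classify once."""
    fused = text_feat + image_feat  # list concatenation
    return softmax(linear(fused, joint_weights))

def late_fusion(text_feat, image_feat, text_weights, image_weights):
    """Decision-level fusion: classify each modality, then average the probabilities."""
    p_text = softmax(linear(text_feat, text_weights))
    p_image = softmax(linear(image_feat, image_weights))
    return [(a + b) / 2 for a, b in zip(p_text, p_image)]

# Toy, hypothetical inputs: 2 text features, 3 image features, 2 classes.
text_feat = [0.2, 0.9]
image_feat = [0.5, 0.1, 0.7]
text_weights = [[1.0, -1.0], [-1.0, 1.0]]
image_weights = [[0.5, 0.0, -0.5], [-0.5, 0.0, 0.5]]
# For the early-fusion classifier, each class row spans all 5 fused features.
joint_weights = [tw + iw for tw, iw in zip(text_weights, image_weights)]

early_probs = early_fusion(text_feat, image_feat, joint_weights)
late_probs = late_fusion(text_feat, image_feat, text_weights, image_weights)
print("early fusion:", early_probs)
print("late fusion:", late_probs)
```

In this toy setup both strategies favor the second class, but they get there differently: early fusion lets a single classifier see every feature at once, while late fusion only combines the per-modality decisions.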


Another important component is the Classification Module, which interprets the fused data to make predictions or decisions. Depending on the task, this module can take different forms, such as neural networks that apply multiple layers of processing to the unified representation. For example, in tasks like image captioning, the module might produce textual descriptions of visual content, while in sentiment analysis, it might determine the emotional tone of the input data.

Multimodal learning techniques are essential for training these models effectively. Techniques such as alignment-based approaches are used to synchronize different types of data, like aligning audio with visual inputs in sign language recognition. On the other hand, co-learning methods facilitate the transfer of knowledge between modalities, improving model performance when data for one modality is scarce or noisy. For instance, in audio-visual speech recognition, co-learning can help a model that understands spoken language to also improve its ability to read lips.
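As a deliberately simple stand-in for alignment, the sketch below pairs each video frame with the audio frame closest to it in time. The sampling rates and the helper name `align_nearest` are assumptions for illustration only; alignment-based models typically learn this correspondence rather than reading it off timestamps.

```python
def align_nearest(video_times, audio_times):
    """For each video timestamp, return the index of the closest audio timestamp."""
    return [
        min(range(len(audio_times)), key=lambda j: abs(audio_times[j] - t))
        for t in video_times
    ]

# Hypothetical timestamps in seconds: video at 25 frames/s, audio features at 100 frames/s.
video_times = [0.00, 0.04, 0.08]
audio_times = [0.00, 0.01, 0.02, 0.03, 0.04, 0.05, 0.06, 0.07, 0.08, 0.09]

pairs = align_nearest(video_times, audio_times)
print(pairs)  # [0, 4, 8]
```

Each video frame now has one matching audio frame, so the two streams can be fed to a model in step.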

Despite their advantages, multimodal AI models face significant challenges, particularly in representation and fusion. Representing multimodal data in a unified way without losing crucial information is complex, as is combining data from different modalities to make accurate predictions. Moreover, developers must address issues like data bias, where one modality might dominate others, potentially leading to less accurate outcomes. Strategies like joint representation and coordinated representation help mitigate these issues, allowing for more robust and versatile multimodal models.
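The two representation strategies named above can be sketched as follows, under the usual reading of the terms: a joint representation maps all modalities into one shared vector, while a coordinated representation keeps one vector per modality and relates them through a similarity measure. The weights and function names here are hypothetical, chosen only so the example runs.

```python
import math

def project(features, weights):
    """Linear projection: one output value per weight row."""
    return [sum(f * w for f, w in zip(features, row)) for row in weights]

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def joint_representation(text_feat, image_feat, joint_weights):
    """Joint: concatenate both modalities and map them into ONE shared vector."""
    return project(text_feat + image_feat, joint_weights)

def coordinated_representation(text_feat, image_feat, text_weights, image_weights):
    """Coordinated: keep one vector per modality and compare them by similarity."""
    text_vec = project(text_feat, text_weights)
    image_vec = project(image_feat, image_weights)
    return text_vec, image_vec, cosine(text_vec, image_vec)

# Toy, hypothetical inputs and weights.
text_feat = [1.0, 0.0]
image_feat = [0.0, 1.0, 0.0]
text_weights = [[1.0, 0.0], [0.0, 1.0]]
image_weights = [[0.0, 1.0, 0.0], [1.0, 0.0, 0.0]]
joint_weights = [[1.0, 0.0, 0.0, 1.0, 0.0], [0.0, 1.0, 1.0, 0.0, 0.0]]

joint_vec = joint_representation(text_feat, image_feat, joint_weights)
text_vec, image_vec, similarity = coordinated_representation(
    text_feat, image_feat, text_weights, image_weights
)
print("joint vector:", joint_vec)
print("coordinated similarity:", similarity)
```

In training, a coordinated model would be pushed to give matching text-image pairs a high similarity, which is one way to keep a single modality from dominating.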

For a more in-depth understanding, you can explore the full article on Addepto's website.

Aimesoft is another company with multimodal AI products. A brief introduction is available on the company website.

You can also find extra teaching articles on our LinkedIn page.

Join us Now in ScholarSphere

Mastering AI: Prompt Perfection

Multimodal Chain-of-Thought Reasoning in Language Models

By Zhuosheng Zhang, et al. (2023)

Summarized by You.com

Multimodal Chain-of-Thought (Multimodal-CoT) reasoning integrates both language (text) and vision (images) into a two-stage framework for enhanced reasoning and answer inference. The existing Chain-of-Thought (CoT) methods, which mainly focus on the language modality, are extended to multimodal scenarios to address complex reasoning tasks. The proposed Multimodal-CoT separates the rationale generation from answer inference, leveraging multimodal information to generate more accurate rationales and mitigate issues like hallucination, thus enhancing convergence speed and overall performance. Experimental results on ScienceQA and A-OKVQA datasets demonstrate the effectiveness of this approach, achieving state-of-the-art performance with models under 1 billion parameters.

Key Points:

- Multimodal-CoT Framework: Integrates text and image modalities into a two-stage process.
- Enhanced Performance: Achieves state-of-the-art results on benchmark datasets with under 1 billion parameters.
- Mitigating Hallucination: Reduces hallucination in generated rationales, leading to more accurate answer inference.
- Open Source: Code is available at https://github.com/amazon-science/mm-cot.

The two-stage framework begins with rationale generation, where the model processes both visual and textual inputs to produce intermediate reasoning steps. In the subsequent stage, these rationales are used alongside the original inputs to infer the final answer. This method addresses the limitations of previous single-modality CoT approaches by providing a more comprehensive understanding through multimodal integration. The study's contributions include being the first to explore multimodal CoT in peer-reviewed literature and demonstrating the benefits of fine-tuning smaller models rather than relying solely on large language models (LLMs).

Key Points:

- Two-Stage Process: Separates rationale generation from answer inference.
- Fine-Tuning Smaller Models: Focuses on models under 1 billion parameters for efficiency.
- First Study in Peer-Reviewed Literature: Pioneering research in multimodal CoT reasoning.
- Practical Applications: Effective on real-world datasets like ScienceQA and A-OKVQA.
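The two-stage flow described above can be sketched in a few lines of Python. This is only an illustration of the control flow, not the code from the mm-cot repository: the `MultimodalCoT` class, the `Model` type, and the two toy stand-in models are hypothetical, and a real setup would plug in fine-tuned vision-language models instead.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple

# A "model" here is any callable that takes text plus vision features and returns text.
Model = Callable[[str, Sequence[float]], str]

@dataclass
class MultimodalCoT:
    rationale_model: Model  # stage 1: generates the reasoning steps
    answer_model: Model     # stage 2: infers the final answer

    def run(self, question: str, vision_features: Sequence[float]) -> Tuple[str, str]:
        # Stage 1: rationale generation from text and vision inputs.
        rationale = self.rationale_model(question, vision_features)
        # Stage 2: answer inference from the original inputs plus the rationale.
        augmented = f"{question}\nRationale: {rationale}"
        answer = self.answer_model(augmented, vision_features)
        return rationale, answer

# Toy stand-ins so the sketch runs end to end (assumptions, not real models).
def toy_rationale_model(text: str, vision: Sequence[float]) -> str:
    brightness = sum(vision) / len(vision)
    return "The image looks bright." if brightness > 0.5 else "The image looks dark."

def toy_answer_model(text: str, vision: Sequence[float]) -> str:
    return "day" if "bright" in text else "night"

pipeline = MultimodalCoT(toy_rationale_model, toy_answer_model)
rationale, answer = pipeline.run("Is it day or night?", [0.9, 0.8, 0.7])
print(rationale)
print(answer)
```

Keeping the two stages as separate callables mirrors the paper's separation of rationale generation from answer inference: the second stage sees the rationale as extra text while still having access to the vision features.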

To read the full text, click here.

To access our Prompt Inventory, check here.

Don’t forget to visit our LinkedIn Page

Cutting-Edge AI Insights for Academia

By Gencraft, mfgium77

At the end of summer, the boat is in the water. The style is hyper realistic cityscapes, Dutch landscape, dramatic skies, digital painting and drawing, majestic ports, mixed Josephine Wall and Andy Kehoe. The colors are glaucous, jonquil, mulberry, powder puff pink and ultramarine. The technique is a secret weapon. The atmosphere is dynamic. The light is cinematic. The dark is also very dark. The colors are high contrast. The boat is in the water.

bad anatomy, out of frame, duplicate, maniacal, blurry background, extra limbs, surprised, off-centered, poor quality, mutilated, uneven, dehydrated, crosseyed, creepy, fused, darkness, morbid, gibberish, lowres, poorly drawn face

Medium, Academic Web3 Conference: AI Goes to College & The Metaverse in Healthcare— Academic Weekly Web3 News

Concordia University of Edmonton (CUE): From Red Pandas to AI Innovations, and so much more at Summer Research Day

Paper of the week: Artificial Intelligence and Employment: New Cross-Country Evidence (2022) by Alexandre Georgieff and Raphaela Hyee

Spotlight on AI Tools for Academic Excellence

Autoresponder.ai: AI-powered auto-replies for messengers like WhatsApp, Telegram etc! Customize messages, set schedules & tailor replies. Revolutionize your chat experience!

Chatbond - AI Chatbot Builder: AI chatbot builder for instant customer responses; respond to customers instantly with a GPT-driven chatbot.

Prolific: Easily find vetted research participants and AI taskers at scale to deliver high-quality human-powered datasets in less than 2 hours.

AIPRM: Prompt management tool and community-driven prompt library for generative AI; The Ultimate Time Saver for ChatGPT and other AI models!

For finding more featured and selected AI tools & apps, please subscribe to ScholarSphere Newsletter Series
