ScholarSphere Newsletter #21

Where AI meets Academia

Welcome to the 21st edition of ScholarSphere

“The mind is not a vessel to be filled but a fire to be kindled.”

Plutarch (AD 46 - AD 119)

Welcome to our AI Newsletter—your ultimate guide to the rapidly changing world of AI in academia. If you haven't joined us yet, now's your chance! Click that button, subscribe with your email, and get ready for an exciting journey through all things AI in the academic realm!

In today's exploration of AI, we'll see...

Deep Dive into AI: Expand Your Knowledge

What is an Attention Mechanism?

By h2o.ai

An Attention Mechanism is a technique used in machine learning and artificial intelligence to improve the performance of models by focusing on relevant information. It allows models to selectively attend to different parts of the input data, assigning varying degrees of importance or weight to different elements.

How Attention Mechanisms Work

Attention mechanisms work by generating attention weights for different elements or features of the input data. These weights determine how much each element contributes to the model's output. The attention weights are calculated based on the relevance or similarity between the elements and a query or context vector. The attention mechanism typically involves three key components:

Query: Represents the current context or focus of the model.

Key: Represents the elements or features of the input data.

Value: Represents the values associated with the elements or features.

The attention mechanism computes the attention weights by measuring the similarity between the query and the keys. The values are then weighted by the attention weights and combined to produce the final output of the attention mechanism.

Picture Credit to Leewayhertz.com
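
The query, key, and value steps described above can be sketched in a few lines of plain Python. This is a minimal illustration, not h2o.ai's implementation: the article only says the weights come from a "similarity" measure, so the scaled dot product and softmax used here are assumptions (they are the common choice in transformer models), and the function names and toy vectors are made up for the example.

```python
import math

def softmax(scores):
    """Turn raw similarity scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Attention for a single query vector (assumed: scaled dot-product similarity)."""
    scale = math.sqrt(len(query))
    # Step 1: similarity between the query and each key.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    # Step 2: normalize the similarities into attention weights.
    weights = softmax(scores)
    # Step 3: combine the values, each weighted by its attention weight.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Toy data: three input elements, each with a 2-dimensional key and value.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]

weights, output = attention(query, keys, values)
print("attention weights:", weights)
print("output:", output)
```

In this toy run, the first and third keys are equally similar to the query, so they receive equal weights, and both outweigh the second key, which is orthogonal to the query.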

Why Attention Mechanisms are Important

Attention mechanisms are important in machine learning and artificial intelligence for several reasons:

Improved Model Performance: By focusing on relevant information, attention mechanisms enable models to make more accurate predictions and capture important patterns or dependencies in the data.

Effective Handling of Variable-Length Inputs: Attention mechanisms allow models to process inputs of variable lengths by attending to different parts of the input sequence dynamically.

Interpretability and Explainability: Attention weights provide insights into the model's decision-making process, making it easier to interpret and explain the model's predictions.

Attention Mechanism Use Cases

Attention mechanisms find applications in various domains and tasks, including:

Machine Translation: Attention helps models focus on relevant words or phrases in the source sentence while generating the target translation.

Text Summarization: Attention allows models to selectively attend to important parts of the input text when generating concise and informative summaries.

Image Captioning: Attention helps models focus on different regions of an image while generating descriptive captions.

Speech Recognition: Attention helps models attend to specific acoustic or linguistic features during speech recognition tasks.

Question Answering: Attention helps models focus on relevant parts of the question and the context to generate accurate answers.

Other Technologies or Terms Related to Attention Mechanisms

There are several related technologies and terms in the field of machine learning and artificial intelligence:

Transformer: The attention mechanism is a fundamental component of the transformer architecture, which has been widely adopted for various tasks.

Recurrent Neural Networks (RNNs): RNN-based models, such as encoder-decoder networks, can be augmented with attention mechanisms to focus on relevant parts of the sequential input data.

Self-Attention: Self-attention is a variant of the attention mechanism in which the elements of a sequence attend to other elements of the same sequence, enabling the model to capture dependencies within the input itself.
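
To make the self-attention idea concrete, here is a small sketch in plain Python in which every element of a sequence serves as the query once and attends to all elements of that same sequence. It rests on simplifying assumptions that are not from the article: the input vectors are used directly as queries, keys, and values (real models apply learned projections first), and similarity is a scaled dot product followed by softmax. The function name and toy data are hypothetical.

```python
import math

def self_attention(sequence):
    """Each vector in `sequence` attends to every vector in the same sequence.

    Simplification: inputs double as queries, keys, and values
    (no learned projection matrices).
    """
    scale = math.sqrt(len(sequence[0]))
    outputs = []
    for query in sequence:
        # Similarity of this element to every element, itself included.
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in sequence]
        # Softmax turns the similarities into weights that sum to 1.
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # The new representation is a weighted mix of the whole sequence.
        outputs.append([
            sum(w * vec[i] for w, vec in zip(weights, sequence))
            for i in range(len(query))
        ])
    return outputs

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
contextual = self_attention(tokens)
for original, mixed in zip(tokens, contextual):
    print(original, "->", mixed)
```

Because the loop runs over whatever sequence it is given, the same function handles inputs of any length, which is the variable-length property mentioned earlier.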

Why H2O.ai Users Would be Interested in Attention Mechanisms

H2O.ai users would be interested in an attention mechanism as it provides a powerful technique for improving model performance and capturing relevant information in complex datasets. Attention mechanisms can enhance the accuracy and interpretability of models, enabling H2O.ai users to make better predictions and gain insights from their data.

Overall, attention mechanisms play a crucial role in machine learning and artificial intelligence by enabling models to focus on relevant information and improve their performance. Their applications span various domains and tasks, making them a valuable tool for data scientists and practitioners. H2O.ai users can benefit from incorporating attention mechanisms into their workflows to enhance the capabilities and outcomes of their machine-learning models.

You can also find extra teaching articles on our LinkedIn page.

Join us now at ScholarSphere

Mastering AI: Prompt Perfection

Mind's Eye of LLMs: Visualization-of-Thought Elicits Spatial Reasoning in Large Language Models

By Wenshan Wu, et al. (2024)

Summarized by You.com

The paper introduces the concept of Visualization-of-Thought (VoT) prompting, designed to enhance spatial reasoning in Large Language Models (LLMs) by visualizing their reasoning processes. Inspired by the human cognitive ability to create mental images, VoT aims to enable LLMs to generate mental visualizations, thereby improving their capacity to handle tasks requiring spatial understanding. The study focuses on spatial reasoning tasks, such as natural language navigation, visual navigation, and visual tiling in 2D grid worlds. VoT significantly improves LLMs' performance in these tasks, outperforming existing multimodal large language models (MLLMs). By integrating a visuospatial sketchpad, VoT allows LLMs to visualize reasoning steps and inform subsequent actions, resembling the human "mind's eye" process. This method demonstrates the potential of LLMs to generate mental images that facilitate complex reasoning.

Key Points:

Visualization-of-Thought (VoT) Prompting: Enhances spatial reasoning by visualizing intermediate reasoning steps.

Human Cognitive Inspiration: Based on humans' ability to create mental images for spatial reasoning.

Improved Task Performance: Outperforms existing MLLMs in spatial reasoning tasks.

Visuospatial Sketchpad: Used to visualize reasoning steps and guide actions.

VoT prompting involves visualizing the intermediate states of a task to guide the reasoning process, thus enabling LLMs to perform spatial reasoning tasks more effectively. The paper evaluates VoT's effectiveness across three tasks: natural language navigation, visual navigation, and visual tiling. It shows that VoT prompting allows LLMs to track their reasoning visually, providing a more grounded understanding of spatial relationships. The tasks require LLMs to understand spatial configurations and make decisions based on visualized reasoning steps, demonstrating the impact of VoT in enhancing spatial awareness. VoT also shows promise in improving LLMs' ability to simulate and manipulate mental images, crucial for spatial reasoning tasks.

Key Points:

Three Evaluation Tasks: Natural language navigation, visual navigation, and visual tiling.

Visual State Tracking: Enables LLMs to track reasoning steps visually, enhancing spatial awareness.

Grounded Understanding: Provides a more grounded approach to spatial relationships.

Simulation and Manipulation: Improves LLMs' ability to simulate and manipulate mental images.

The study finds that VoT prompting significantly outperforms other prompting methods, such as Chain-of-Thought (CoT), by inducing LLMs to visualize their internal states. The experiments reveal that VoT prompting leads to better performance across all tasks, particularly in challenging spatial reasoning scenarios. Despite its effectiveness, the study notes that VoT's performance is not yet perfect, especially in more complex tasks like route planning, indicating room for improvement. The research suggests that VoT can benefit less powerful language models by improving their spatial reasoning capabilities. It highlights the potential of VoT to scale up with advanced models, enhancing performance significantly as model complexity increases.

Key Points:

Superior Performance: VoT outperforms CoT in spatial reasoning tasks.

Challenges in Complex Tasks: Performance is not yet perfect in complex scenarios.

Benefits for Less Powerful Models: VoT can enhance spatial reasoning in smaller models.

Scalability: Potential to improve performance in more advanced models.

The paper concludes by emphasizing the potential of VoT in advancing the cognitive and reasoning abilities of LLMs. Future research aims to explore the application of VoT in multimodal models to enhance spatial awareness further. The study also highlights the need for automatic data augmentation from real-world scenarios to improve the internal representation of mental images. This work opens avenues for developing more complex and diverse representations, such as 3D semantics, to strengthen the "mind's eye" of LLMs. The authors acknowledge the limitations of current LLMs in visual state tracking and suggest that explicit instruction tuning can significantly improve performance. Overall, the study presents VoT as a promising technique for enhancing spatial reasoning in language models.

Key Points:

Future Applications: Exploring VoT in multimodal models and real-world scenarios.

Need for Diverse Representations: Developing complex representations like 3D semantics.

Limitations and Improvements: Current limitations in visual state tracking and potential improvements.

Promising Technique: VoT as a promising method for enhancing spatial reasoning.

Explanation of Visualization-of-Thought Prompting

Visualization-of-Thought (VoT) prompting is a technique designed to enhance the spatial reasoning capabilities of language models by encouraging them to visualize their reasoning processes. This method is inspired by the human cognitive ability to create mental images, which aids in understanding spatial relationships and making informed decisions.

Example:

Task: Navigate a grid from start to destination.

VoT Prompting: "Visualize the state after each reasoning step."

Process: As the model considers each move, it generates an internal visualization of the grid, marking its current position and potential paths.

Outcome: The model uses these mental images to decide the best route, simulating the navigation process visually.

By incorporating VoT prompting, LLMs can create interleaved reasoning traces and visualizations, allowing them to track their reasoning steps visually and improve their spatial understanding. This approach enhances the model's ability to perform tasks that require spatial awareness and reasoning, making it a valuable tool for complex navigation and planning tasks.
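
The grid example above can be mocked up in Python to show what an interleaved trace of reasoning steps and visualizations might look like. This is only an illustrative sketch, not the paper's code: the instruction string is the one quoted above, while the helper names, the grid symbols ("A" for the agent, "G" for the goal, "." for an empty cell), the task wording, and the hard-coded route are all assumptions. In a real setting the language model would produce the steps and grids itself.

```python
VOT_INSTRUCTION = "Visualize the state after each reasoning step."

# Row/column offsets for each move on the grid.
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}

def build_vot_prompt(task_description):
    """Append the VoT instruction to a task description."""
    return f"{task_description}\n{VOT_INSTRUCTION}"

def render_grid(rows, cols, position, goal):
    """Draw the grid as text: 'A' = agent, 'G' = goal, '.' = empty cell."""
    lines = []
    for r in range(rows):
        cells = []
        for c in range(cols):
            if (r, c) == position:
                cells.append("A")
            elif (r, c) == goal:
                cells.append("G")
            else:
                cells.append(".")
        lines.append(" ".join(cells))
    return "\n".join(lines)

def trace_route(rows, cols, start, goal, moves):
    """Return the final position and an interleaved trace: a step, then the grid state."""
    position = start
    trace = []
    for step, move in enumerate(moves, start=1):
        dr, dc = MOVES[move]
        position = (position[0] + dr, position[1] + dc)
        trace.append(f"Step {step}: move {move}")
        trace.append(render_grid(rows, cols, position, goal))
    return position, trace

prompt = build_vot_prompt(
    "Navigate a 3x3 grid from the top-left corner to the bottom-right corner."
)
final_position, trace = trace_route(
    3, 3, start=(0, 0), goal=(2, 2), moves=["right", "right", "down", "down"]
)
print(prompt)
print("\n".join(trace))
```

Each text grid plays the role of one "mental image": after every move the current state is written out, so the next decision can be made against an explicit picture of the grid instead of an implicit one.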

To read the full text, click here

For full access to our Prompt Inventory, check here

Don’t forget to visit our LinkedIn Page

Cutting-Edge AI Insights for Academia

Jason Blomquist is an associate professor in Boise State’s School of Nursing.

University at Buffalo: UBIT's crucial role in National AI Institute for Exceptional Education launch

British Educational Research Association (BERA): Special Interest Group (SIG) Artificial and Human Intelligence

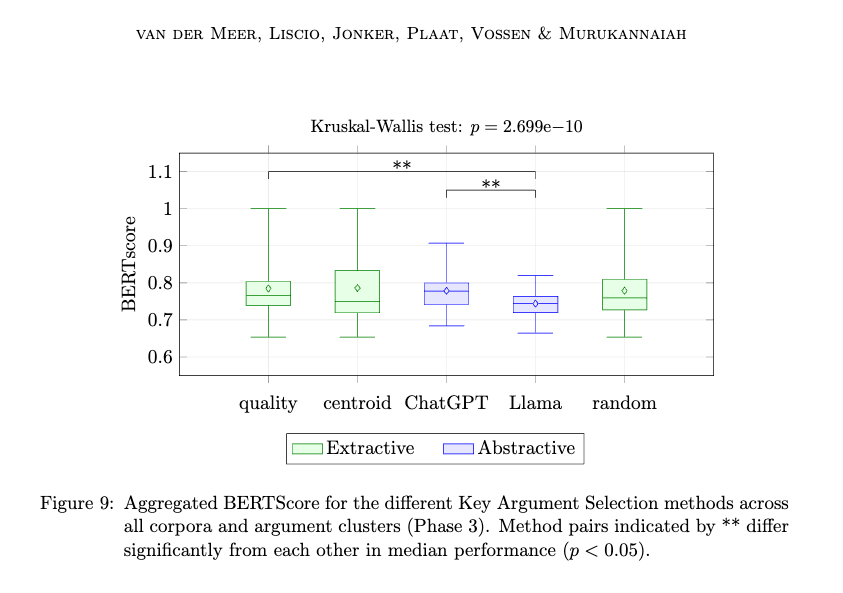

Paper of the week: A Hybrid Intelligence Method for Argument Mining by Michiel van der Meer, et al. (2024)

Spotlight on AI Tools for Academic Excellence

DataCamp: Learn Data Science & AI online at your own pace; Unlock the power of data and AI by learning Python, ChatGPT, SQL, Power BI, and earn industry-leading Certifications.

PTE APEUni: Pearson Test of English (PTE) is a computer-based academic exam with online mock tests available. Join APEUni to practice PTE for FREE and use AI scorings to evaluate your performance.

Glasp: A PDF & Web highlighter that helps you collect and organize your favorite quotes and thoughts from the web. You can also access other like-minded people’s learning and build your AI clone from your highlights and notes.

Smodin: A platform that improves writing with various tools for students, writers, and internet workers globally.

To find more featured and selected AI tools and apps, please subscribe to the ScholarSphere Newsletter Series
