ScholarSphere Newsletter #22

Where AI meets Academia

Welcome to edition 22 of ScholarSphere

“Intelligence is quickness in seeing things as they are.”

George Santayana (1863-1952)

Welcome to our AI Newsletter—your ultimate guide to the rapidly changing world of AI in academia. If you haven't joined us yet, now's your chance! Click that button, subscribe with your email, and get ready for an exciting journey through all things AI in the academic realm!

In today's exploration of AI, we'll see...

Deep Dive into AI: Expand Your Knowledge

Fusion Models: Comparative Analysis of Two Artificial Intelligence Based Decision Level Fusion Models for Heart Disease Prediction (Link)

By Hafsa Binte Kibria, et al. (2020)

Summarized by You.com (GPT-4o)

The paper explores the use of artificial intelligence in healthcare, focusing on machine learning models for predicting heart disease, which remains a leading cause of death worldwide. The study presents a fusion model that improves prediction performance by combining decision scores from different algorithms. Two fusion models are analyzed: one combining a deep neural network (DNN) with a decision tree (DT), and the other combining a DNN with logistic regression (LR). The models were implemented with Jupyter Notebook, scikit-learn, TensorFlow, and Keras. Results indicated significant improvements in accuracy, recall, F1-score, and AUC after applying decision-level fusion. Model-2, which combines DNN and LR, achieved the highest classification accuracy of 87.12% using 10-fold cross-validation.

The study emphasizes the importance of early detection of cardiovascular diseases (CVDs) and presents AI as a promising tool for improving diagnosis accuracy. While traditional diagnostic methods like ECG and cholesterol tests are effective, they are costly and time-consuming. AI-driven systems, particularly those using machine learning, offer more precise results and reduced costs. This research contributes by introducing a decision-level fusion approach, which merges decisions from separate algorithms to improve classification accuracy. The proposed approach showed notable improvements over individual machine learning models, highlighting the effectiveness of fusing DNN with other algorithms. The fusion occurred at the decision level by aggregating decision scores, enhancing the model’s ability to predict the presence or absence of heart disease.

Key Points:

Early Detection Importance: AI improves CVD diagnosis accuracy and efficiency.

AI Advantages: More precise and cost-effective than traditional methods.

Decision-Level Fusion: Merges decisions from multiple algorithms.

Improved Classification: Fusion enhances prediction accuracy over individual models.

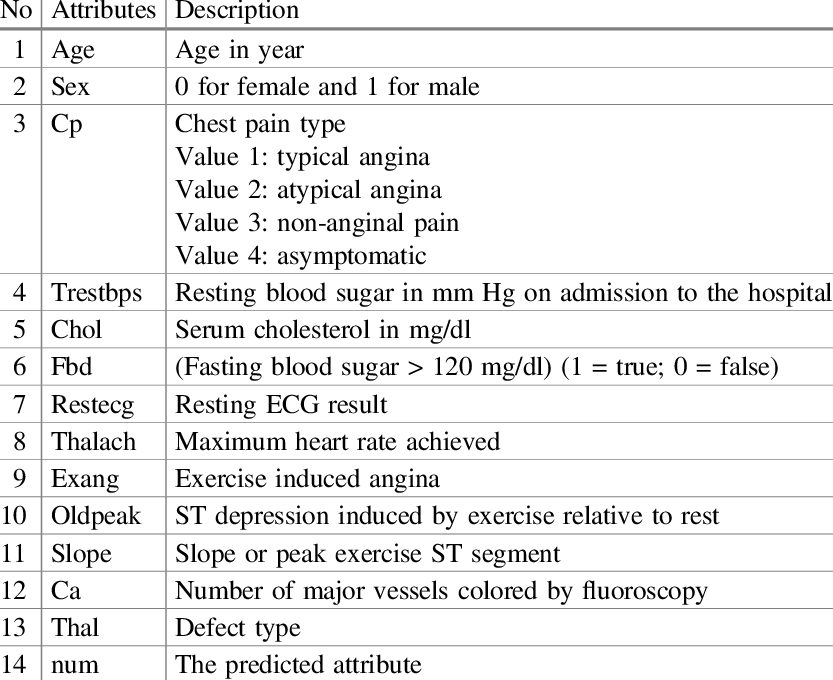

The paper details the methodology, including data collection from the UCI ML repository, preprocessing, feature extraction, and model implementation. The Cleveland dataset, containing 303 instances and 13 features, served as the basis for training and testing the models. Data preprocessing involved handling missing values, encoding categorical features with label encoding, and scaling features with min-max normalization. The models were evaluated using five- and ten-fold cross-validation to ensure robustness. The study’s primary objective was to form a fusion model that improves accuracy in classifying the presence or absence of cardiovascular disease. The DNN was used for its ability to learn through multiple hidden layers, while DT and LR served as complementary algorithms for decision fusion.

Key Points:

Data Source: Cleveland dataset from UCI ML repository.

Preprocessing: Handling missing values and normalizing features.

Model Evaluation: Five- and ten-fold cross-validation for robustness.

Fusion Objective: Improve classification accuracy with combined models.
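The two preprocessing and evaluation steps named above, min-max normalization and k-fold cross-validation, can be sketched in plain Python. This is a minimal illustration and not the authors' code: the function names `min_max_normalize` and `k_fold_indices` and the sample ages are assumptions made for this example, and a real project would more likely use a library such as scikit-learn.

```python
from typing import List, Tuple


def min_max_normalize(column: List[float]) -> List[float]:
    """Rescale one feature column to the range [0, 1]."""
    lo, hi = min(column), max(column)
    if hi == lo:
        # A constant column has no spread to rescale; map it to zeros.
        return [0.0 for _ in column]
    return [(x - lo) / (hi - lo) for x in column]


def k_fold_indices(n_samples: int, k: int) -> List[Tuple[List[int], List[int]]]:
    """Split indices 0..n_samples-1 into k (train, test) pairs, without shuffling."""
    # Spread the remainder over the first folds so fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    folds = []
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = [i for i in range(n_samples) if i < start or i >= start + size]
        folds.append((train, test))
        start += size
    return folds


# Hypothetical values for one feature (not taken from the Cleveland dataset).
ages = [29.0, 41.0, 53.0, 77.0]
scaled_ages = min_max_normalize(ages)  # [0.0, 0.25, 0.5, 1.0]

# 303 is the number of instances the summary reports for the Cleveland dataset.
folds = k_fold_indices(303, 10)
```

With 303 instances and ten folds, three test folds hold 31 samples and seven hold 30, so every instance is used for testing exactly once.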

Performance evaluation showed that the fusion models, particularly DNN+LR, outperformed the individual algorithms. The study also compared its models against previous works using similar datasets and found that the fusion approach provided better accuracy than standalone models. Model-2 (DNN+LR) consistently yielded higher accuracy across different validation folds, supporting the efficacy of decision-level fusion in improving diagnostic predictions. The authors suggest that this fusion model can be applied to other medical diagnoses, potentially combining more than two algorithms for even greater accuracy. The paper concludes by emphasizing the potential of machine learning in transforming healthcare, particularly in predictive diagnostics, and the advantages of fusion models in enhancing predictive performance.

Table 4 The Performance of Deep Neural Network and Logistic Regression (Model-2) with Five-fold Cross-Validation

Key Points:

Fusion Model Performance: DNN+LR outperformed individual models.

Comparison with Previous Work: Fusion approach provided better accuracy.

Model-2 Success: Consistently higher accuracy across validation folds.

Future Applications: Potential for broader use in medical diagnostics.

Explanation of Fusion AI

Fusion AI involves combining outputs from multiple artificial intelligence models to achieve improved results in tasks such as classification, prediction, or decision-making. This technique leverages the strengths of different models to enhance overall performance, especially in complex scenarios.

Fusion strategies using deep learning. Model architecture for different fusion strategies. Early fusion (left figure) concatenates original or extracted features at the input level. Joint fusion (middle figure) also joins features at the input level, but the loss is propagated back to the feature extracting model. Late fusion (right figure) aggregates predictions at the decision level.

Examples:

Heart Disease Prediction: Fusion AI can integrate the decision scores of a deep neural network and logistic regression to improve the accuracy of predicting heart disease, as demonstrated in the paper.

Image Recognition: In image recognition tasks, combining convolutional neural networks (CNNs) with support vector machines (SVMs) can yield better classification results by utilizing CNNs' feature extraction capabilities and SVMs' classification strengths.

By aggregating multiple models' decisions, Fusion AI can reduce errors and increase robustness, making it a valuable approach in fields requiring high accuracy and reliability.
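Decision-level (late) fusion can be sketched as follows. This is only one possible aggregation rule, a weighted average of each model's positive-class score followed by a threshold, and it is an assumption made for illustration; the paper's exact rule may differ. The names `fuse_scores` and `to_labels` and all scores below are hypothetical.

```python
from typing import List, Sequence


def fuse_scores(scores_a: Sequence[float], scores_b: Sequence[float],
                weight_a: float = 0.5) -> List[float]:
    """Weighted average of two models' positive-class scores, per sample."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both models must score the same samples")
    weight_b = 1.0 - weight_a
    return [weight_a * a + weight_b * b for a, b in zip(scores_a, scores_b)]


def to_labels(scores: Sequence[float], threshold: float = 0.5) -> List[int]:
    """Turn fused scores into 0/1 decisions (1 = disease predicted)."""
    return [1 if s >= threshold else 0 for s in scores]


# Hypothetical scores for four patients (illustrative numbers only).
dnn_scores = [0.9, 0.4, 0.2, 0.6]
lr_scores = [0.7, 0.8, 0.1, 0.3]

fused = fuse_scores(dnn_scores, lr_scores)
labels = to_labels(fused)  # [1, 1, 0, 0]
```

For the second and fourth patients the two models disagree when thresholded on their own, and the fused score settles the decision. That is the sense in which late fusion aggregates predictions rather than features.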

You can also find extra teaching articles on our LinkedIn page.

Join us Now in ScholarSphere

Mastering AI: Prompt Perfection

Least-to-Most Prompting Enables Complex Reasoning in Large Language Models (Link)

Published as a conference paper at ICLR 2023

By Denny Zhou, et al. (2023)

Summarized by You.com

The paper introduces a prompting strategy called Least-to-Most (L2M) prompting, designed to enhance the complex reasoning capabilities of large language models (LLMs). The key idea is to break down a complex problem into a series of simpler subproblems and solve them sequentially, where each subproblem is solved using the answers to previously solved subproblems. This approach contrasts with Chain-of-Thought (CoT) prompting, which often struggles with tasks requiring generalization to problems more complex than the examples provided. Experimental results demonstrate that L2M prompting significantly improves performance in tasks related to symbolic manipulation, compositional generalization, and mathematical reasoning. Notably, the GPT-3 code-davinci-002 model with L2M prompting achieved an accuracy of at least 99% on the SCAN compositional generalization benchmark, compared to only 16% with CoT prompting. This demonstrates L2M's superior ability to generalize to more complex problems using fewer examples. The study includes detailed prompts and results for various tasks, showing the effectiveness of the L2M technique.

Least-to-most prompting flow

Image Credit to Denny Zhou, et al. (2023)

Key Points:

Least-to-Most Prompting: Breaks complex problems into simpler subproblems for sequential solving.

Improved Generalization: Outperforms Chain-of-Thought prompting in complex reasoning tasks.

High Accuracy in SCAN Benchmark: Achieved at least 99% accuracy with L2M prompting, versus 16% with CoT.

No Training Required: L2M utilizes few-shot prompting without training or fine-tuning.

The paper further illustrates L2M's capability through specific examples, such as the last-letter-concatenation task, where it decomposes word lists into sublists for sequential solving. This method allows the language model to handle longer lists than those seen in the prompt examples, showcasing its strength in length generalization. The experiments reveal that L2M prompting significantly outperforms CoT prompting, especially as the complexity or length of the input increases. The study also explores the SCAN benchmark, demonstrating that L2M can solve it with high accuracy using only a few examples, as opposed to neural-symbolic models that require extensive training data. Additionally, L2M prompting enhances mathematical reasoning by breaking down problems into smaller, manageable steps.

Key Points:

Task Decomposition: Demonstrated on tasks like last-letter-concatenation and SCAN.

Length Generalization: Handles longer inputs effectively compared to CoT.

Minimal Examples Needed: Solves SCAN with fewer examples than conventional models.

Enhanced Mathematical Reasoning: Effective in breaking down and solving math problems.
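The decomposition used for last-letter concatenation can be sketched in a few lines. In this sketch ordinary string code stands in for the language model, so it shows only the structure of the two stages (growing sublists, then reuse of the previous answer), not any model behavior. The function names and the word list are assumptions made for this example, and every word is assumed to be non-empty.

```python
from typing import Dict, List


def decompose(words: List[str]) -> List[List[str]]:
    """Stage 1: turn a word list into growing sublists (prefixes)."""
    return [words[:i] for i in range(1, len(words) + 1)]


def solve_sequentially(words: List[str]) -> Dict[str, str]:
    """Stage 2: solve each sublist by extending the previous sublist's answer."""
    answers: Dict[str, str] = {}
    previous = ""
    for sublist in decompose(words):
        # Only the newest word needs work; the earlier answer is reused as-is.
        previous = previous + sublist[-1][-1]
        answers[", ".join(sublist)] = previous
    return answers


answers = solve_sequentially(["think", "machine", "learning"])
final = answers["think, machine, learning"]  # "keg"
```

Because each step adds just one letter to an answer that is already known, the work per step stays the same no matter how long the list grows, which is the intuition behind the length generalization described above.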

The research highlights the limitations of existing prompting techniques, such as CoT, which struggles with easy-to-hard generalization. L2M addresses this by leveraging a two-stage process: decomposition and subproblem solving, allowing language models to tackle more complex problems than demonstrated in prompts. The study presents various results showing L2M's superior performance across different language models and tasks. Error analysis indicates that most failures in L2M are due to concatenation errors rather than incorrect problem decomposition. This suggests potential areas for further refinement and improvement in L2M prompting.

Key Points:

Limitations of CoT: Difficulty with generalizing to more complex tasks.

Two-Stage L2M Process: Decomposition followed by sequential subproblem solving.

Superior Performance Across Models: Demonstrated effectiveness in various tasks.

Error Analysis: Most errors are due to concatenation, not decomposition.

The paper concludes by discussing the broader implications of L2M prompting for advancing the reasoning capabilities of language models. It suggests that L2M represents a step towards more interactive and bidirectional communication between humans and AI, facilitating more effective learning. The authors acknowledge the need for further research to enhance L2M's robustness and applicability across diverse domains. They also highlight the potential of L2M to be combined with other prompting techniques for even greater performance improvements. Overall, the study underscores L2M's promise in enabling complex reasoning in LLMs, bridging the gap between machine learning and human-like problem-solving abilities.

Key Points:

Implications for AI Reasoning: L2M enhances reasoning capabilities of language models.

Towards Bidirectional Interaction: Facilitates more interactive communication with AI.

Future Research Directions: Focus on robustness and applicability across domains.

Combination with Other Techniques: Potential for further performance improvements.

Explanation of Least-to-Most Prompting

Least-to-Most (L2M) prompting is a technique used to enhance the reasoning capabilities of language models by breaking down complex problems into simpler, sequential subproblems. This approach allows the model to solve each subproblem using the solutions from previous steps, facilitating a step-by-step problem-solving process.

Example:

Problem: Calculate the total cost of a meal with tax and tip.

L2M Approach:

Break down the problem: "What is the cost of the meal before tax and tip?", "What is the tax amount?", "What is the tip amount?"

Solve each subproblem: Calculate the pre-tax cost, then the tax, then the tip.

Combine solutions: Add the pre-tax cost, tax, and tip to get the total cost.

By decomposing complex tasks into manageable parts, L2M prompting helps language models effectively tackle problems that require multi-step reasoning, generalizing to tasks more complex than those demonstrated in the initial examples.
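The meal example above can be turned into a small prompt-chaining sketch. Everything here is an assumption made for illustration: `least_to_most` is a hypothetical helper, `stub_model` is a canned stand-in for a real language model call, the prices and percentages are invented, and the final subquestion "What is the total cost?" is added to represent the combine step.

```python
from typing import Callable, List, Tuple


def least_to_most(context: str, subquestions: List[str],
                  ask: Callable[[str], str]) -> List[Tuple[str, str]]:
    """Answer subquestions in order, feeding earlier Q/A pairs into each prompt."""
    solved: List[Tuple[str, str]] = []
    for question in subquestions:
        history = "".join(f"Q: {q}\nA: {a}\n" for q, a in solved)
        prompt = f"{context}\n{history}Q: {question}\nA:"
        solved.append((question, ask(prompt)))
    return solved


# Canned answers for the stub: 10% tax and 20% tip on a $40.00 meal.
CANNED = {
    "What is the cost of the meal before tax and tip?": "40.00",
    "What is the tax amount?": "4.00",
    "What is the tip amount?": "8.00",
    "What is the total cost?": "52.00",
}
prompts_seen: List[str] = []


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a language model; looks up the last question."""
    prompts_seen.append(prompt)
    last_question = prompt.rsplit("Q: ", 1)[1].split("\nA:")[0]
    return CANNED[last_question]


context = ("A meal costs $40.00 before tax. "
           "Tax is 10% and the tip is 20% of the pre-tax cost.")
steps = least_to_most(context, list(CANNED), stub_model)
total = steps[-1][1]  # "52.00"
```

Each later prompt contains the earlier questions and their answers, so the model (here, the stub) never has to solve the whole problem in one step. Swapping `stub_model` for a real model call would leave `least_to_most` unchanged.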

To read the full text, click here

For full access to our Prompt Inventory, check here

Don’t forget to visit our LinkedIn Page

Cutting-Edge AI Insights for Academia

Felix Ohswald is the co-founder and CEO of GoStudent

Paper of the week: Robots Should Be Slaves by Joanna J. Bryson (2010)

Spotlight on AI Tools for Academic Excellence

Smartick | Online Elementary Math For Children: We help children become more confident in mathematics through daily practice.

GitBook: A knowledge management tool for technical teams. With GitBook you get beautiful documentation for your users, and a branch-based Git workflow for your team.

Glean: A search solution for teams to find information and important knowledge.

Conch: Your undetectable AI writing assistant. Write, study, and research faster with Conch, while humanizing AI-written text.

Re:amaze: An integrated customer service, live chat, and helpdesk platform for online businesses.

To find more featured AI tools and apps, please subscribe to the ScholarSphere Newsletter series.
