- ScholarSphere Newsletter

- Posts

- ScholarSphere Newsletter #24

ScholarSphere Newsletter #24

Where AI meets Academia

Welcome to edition 24 of ScholarSphere

“I not only use all the brains that I have, but all I can borrow.”

Woodrow Wilson (December 28, 1856 – February 3, 1924)

Welcome to our AI Newsletter—your ultimate guide to the rapidly changing world of AI in academia. If you haven't joined us yet, now's your chance! Click that button, subscribe with your email, and get ready for an exciting journey through all things AI in the academic realm!

In today's search of AI, we'll see...

Deep Dive into AI: Expand Your Knowledge

Sentiment Analysis in the Age of Generative AI (Link)

By Jan Ole Krugmann and Jochen Hartmann (2024)

Summarized by You.com (GPT4o)

The paper "Sentiment Analysis in the Age of Generative AI" explores the impact of Large Language Models (LLMs) like GPT-3.5, GPT-4, and Llama 2 on sentiment analysis, a key task in understanding consumer emotions and opinions in marketing research. It highlights the potential of LLMs to outperform traditional transfer learning models, even in zero-shot scenarios, where models perform tasks without domain-specific training. The study benchmarks these LLMs against established models, showing that LLMs can match or exceed the accuracy of conventional methods, particularly in complex sentiment classification tasks. The authors examine how factors like data origin, text complexity, and prompting techniques affect classification accuracy. They find that linguistic features, such as lengthy words, enhance performance, whereas single-sentence reviews and unstructured social media texts lower accuracy. The paper also investigates the explainability of LLM-generated sentiment classifications, noting Llama 2's superior capability in providing detailed explanations, which underscores its advanced reasoning abilities.

Fig. 1 Empirical framework for sentiment analysis in the age of Generative AI, adapted from Hartmann et al. (2023). Light gray shading indicates the experimental scope of the present research

Image Credit to Jan Ole Krugmann and Jochen Hartmann (2024)

Key Points:

LLM Performance: LLMs can surpass traditional models in sentiment classification.

Factors Affecting Accuracy: Data origin, text complexity, and prompting techniques.

Linguistic Features: Lengthy words improve performance, unstructured texts reduce it.

Explainability: Llama 2 excels in providing detailed sentiment classification explanations.

The study conducts experiments to evaluate LLMs in binary and three-class sentiment classification tasks using over 3,900 unique text documents from 20 datasets. Results show that LLMs, particularly GPT-4, perform strongly in these tasks, demonstrating significant accuracy improvements over previous models. The paper discusses the importance of data characteristics and analytical procedures in influencing LLM performance, noting that Twitter datasets pose more challenges due to their colloquial language. Contextual prompting proves beneficial for GPT-3.5, while GPT-4 and Llama 2 show varied results. The experiments highlight the importance of prompt engineering and dataset selection in optimizing LLM performance for sentiment analysis.

Table 1 Binary sentiment classification accuracy for transfer learning models and LLMs building on Hartmann et al. (2023)

Image Credit to Jan Ole Krugmann and Jochen Hartmann (2024)

Key Points:

Experimental Setup: Evaluation on binary and three-class tasks with diverse datasets.

Performance Insights: GPT-4 shows strong performance; contextual prompting helps GPT-3.5.

Challenges with Twitter Data: Colloquial language affects performance.

Importance of Prompt Engineering: Key to optimizing LLM performance.

The paper also examines the explainability of LLMs, assessing the clarity and detail of sentiment classification explanations. Llama 2 consistently provides the most understandable and detailed explanations, surpassing GPT-3.5 and aligning closely with GPT-4. The research suggests that LLMs can bridge the gap between accuracy and explainability, challenging the traditional "black box" perception of AI models. Additionally, the study identifies critical factors influencing explainability, such as word count and the use of citations in explanations. These findings emphasize the potential of LLMs to provide insights into AI decision-making processes, making them valuable tools for both academic research and business applications.

Fig. 4 Confusion matrices for three-class zero-shot sentiment classification for GPT-3.5, GPT-4, and Llama 2

Image Credit to Jan Ole Krugmann and Jochen Hartmann (2024)

Key Points:

Explainability Evaluation: Llama 2 excels in providing detailed explanations.

Bridging Accuracy and Explainability: LLMs challenge the "black box" perception.

Influence of Word Count and Citations: Key factors in enhancing explainability.

Value for Research and Business: Provides insights into AI decision-making processes.

The conclusion underscores the transformative potential of LLMs in sentiment analysis, emphasizing their ability to deliver high accuracy without extensive fine-tuning. The paper advocates for using LLMs in business applications due to their zero-shot capabilities, which reduce the need for task-specific model development. However, it also highlights the importance of considering factors like reproducibility, cost, and ethical implications when deploying LLMs. The authors call for ongoing research to enhance LLM understanding and address challenges like bias and data sensitivity. Overall, the study provides a comprehensive framework for selecting sentiment analysis methods in the age of Generative AI, offering practical guidance for researchers and practitioners.

Fig. 6 Evaluation matrix for method selection summarizing the consolidated results.

Note: The table records average accuracy per feature without accounting for multiple interactions between dataset characteristics (e.g., number of classes and text origin). Nevertheless, it helps researchers to gauge efcacy and the relative performance of LLMs

Image Credit to Jan Ole Krugmann and Jochen Hartmann (2024)

Key Points:

Transformative Potential: LLMs offer high accuracy without fine-tuning.

Business Applications: Zero-shot capabilities reduce model development needs.

Considerations for Deployment: Reproducibility, cost, and ethics are crucial.

Framework for Method Selection: Provides guidance for researchers and practitioners.

Influence of AI LLMs on Sentiment Analysis Techniques

AI Large Language Models (LLMs) significantly enhance sentiment analysis by providing robust, adaptable methods that outperform traditional techniques in various tasks. LLMs like GPT-3.5, GPT-4, and Llama 2 leverage their extensive training on diverse datasets to perform zero-shot and few-shot learning, allowing them to classify sentiment with high accuracy without domain-specific training. This adaptability makes LLMs particularly effective in handling both binary and multi-class sentiment classification tasks, offering improvements in classification accuracy across different datasets.

Example:

Zero-Shot Sentiment Analysis: LLMs can accurately classify sentiments in online reviews without prior exposure to similar data, using natural language prompts to guide the analysis.

Improved Explainability: LLMs provide detailed, understandable explanations for their sentiment classifications, helping users understand the reasoning behind AI decisions.

The increase in sentiment analysis accuracy with LLMs is attributed to their ability to process complex linguistic features and leverage contextual information effectively, making them powerful tools for understanding consumer emotions and opinions in marketing and other fields.

You can also find extra teaching articles in our LinkedIn Page.

Join us Now in ScholarSphere

Mastering AI: Prompt Perfection

Better Zero-Shot Reasoning with Role-Play Prompting (Link)

By Aobo Kong, et al. (2023)

Summarized by You.com (GPT4o)

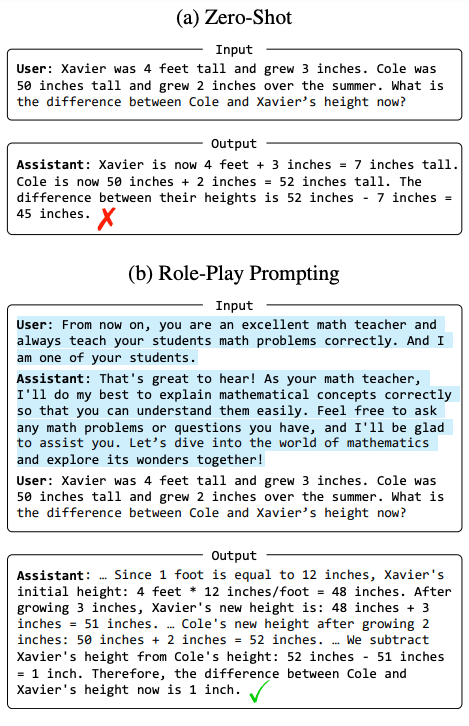

The paper "Better Zero-Shot Reasoning with Role-Play Prompting" explores how role-play prompting can enhance the reasoning capabilities of large language models (LLMs) like ChatGPT. Role-play prompting involves assigning a specific role to the LLM, such as a math teacher or a judge, to guide its responses in a more contextually aware and accurate manner. This study evaluates role-play prompting against standard zero-shot and zero-shot Chain-of-Thought (CoT) prompting across twelve diverse reasoning benchmarks. The research demonstrates that role-play prompting significantly improves accuracy in tasks like AQuA and Last Letter, showing a rise from 53.5% to 63.8% and 23.8% to 84.2%, respectively. The authors propose a two-stage framework for role-play prompting: first, constructing task-specific prompts, and second, using these prompts to guide responses to reasoning queries. This approach not only outperforms baseline methods but also serves as an implicit trigger for the CoT process, enhancing the LLM's reasoning capabilities. The paper provides insights into the design and effectiveness of role-play prompts in improving LLM performance.

Figure 1: Examples of ChatGPT with (a) zero-shot and (b) role-play prompting. The role-play prompts are highlighted.

Image Credit to Aobo Kong, et al. (2023)

Key Points:

Role-Play Prompting: Assigns roles to LLMs to guide responses contextually.

Improved Accuracy: Significant gains over zero-shot and zero-shot CoT methods.

Two-Stage Framework: Involves prompt construction and guided response elicitation.

Implicit CoT Trigger: Enhances reasoning capabilities in LLMs.

Role-play prompting capitalizes on the LLMs' ability to embody various personas, ranging from fictional characters to specific roles like teachers or advisors. By doing so, it enriches the interaction and tailors the LLM's responses to the assigned role, leading to more accurate and contextually relevant outputs. The study also compares role-play prompting with Zero-Shot-CoT, a method that prompts models to "think step by step." While Zero-Shot-CoT can stimulate reasoning in some cases, role-play prompting proves more effective across a broader range of tasks. This is evident in tasks where spontaneous CoT generation is challenging, as role-play prompting effectively stimulates the reasoning process. The research highlights the potential of role-play prompting to be used as a baseline for reasoning tasks, offering a new direction for enhancing LLM performance through innovative prompting techniques.

Figure 2: The two-stage framework of our proposed role-play prompting. The role-play prompts are highlighted.

Image Credit to Aobo Kong, et al. (2023)

Key Points:

Persona Embodiment: LLMs adopt roles for better contextual interaction.

Comparison with Zero-Shot-CoT: Role-play prompting is more effective across tasks.

Stimulating Reasoning: Effective even where spontaneous CoT is difficult.

Baseline for Reasoning: Offers a new standard in LLM prompting techniques.

The researchers systematically evaluate role-play prompting on various reasoning tasks, demonstrating how it can serve as a more effective implicit trigger for chain-of-thought processes than existing methods. The study uses a two-round dialogue process to immerse the LLM in its assigned role, enhancing its framing and response quality. In tasks like commonsense reasoning and symbolic reasoning, role-play prompting consistently outperforms zero-shot and CoT methods. The findings suggest that this method can be particularly useful in tasks where structured logical reasoning is required, thereby increasing the LLM's ability to generate coherent and accurate responses. The paper concludes that role-play prompting not only enhances reasoning capabilities but also paves the way for future research in integrating role-playing techniques with LLMs.

Table 2: Accuracy comparison of Role-Play Prompting with Few-Shot-CoT, Zero-Shot, Zero-Shot-CoT on each dataset. In the rows “CoT in Zero-Shot", the check mark denotes that ChatGPT can spontaneously generate CoT on the corresponding dataset under the zero-shot setting, while the cross (wrong symbol) denotes otherwise.

Image Credit to Aobo Kong, et al. (2023)

Key Points:

Two-Round Dialogue Process: Deepens role immersion for better responses.

Outperformance in Reasoning Tasks: Superior to zero-shot and CoT methods.

Utility in Structured Reasoning: Useful in tasks requiring logical coherence.

Future Research Directions: Encourages integrating role-playing with LLMs.

The paper emphasizes the importance of role selection and prompt design in maximizing the effectiveness of role-play prompting. It recommends choosing roles that naturally align with the task requirements, such as a math teacher for arithmetic problems. The study also highlights the impact of prompt structure, suggesting that two-round prompts with complementary descriptions enhance model immersion and performance. Additionally, experiments with different LLMs, including open-source models, show that role-play prompting generalizes well across various architectures and scales. The authors call for further exploration into role-play prompting to unlock the full potential of LLMs in diverse application scenarios, advocating for more research to refine and expand on these techniques.

Key Points:

Role Selection and Prompt Design: Crucial for maximizing effectiveness.

Two-Round Prompts: Improve immersion and performance.

Generalization Across Models: Effective in various LLM architectures.

Call for Further Research: Encourages exploration of role-play techniques.

Explanation of Role-Playing Prompting

Role-Playing Prompting is a technique where an AI language model is assigned a specific role, such as a teacher or advisor, to guide its responses in a way that aligns with the assumed persona. This method aims to enhance the AI's reasoning and interaction quality by providing context and structure to its responses.

Example:

Math Problem: Instead of simply asking the model to solve a math problem, you prompt it by saying, "From now on, you are a math teacher. Please explain how to solve this problem to one of your students."

Improved Response: By assigning the role of a teacher, the model is encouraged to provide a more detailed and structured explanation, leading to better understanding and accuracy in the solution.

Role-Playing Prompting is considered more effective than zero-shot prompting because it implicitly triggers a chain-of-thought process, allowing the model to generate responses that are contextually rich and logically coherent. This method leverages the model's ability to embody personas, thereby improving its performance on tasks requiring nuanced reasoning and interaction.

For reading the full text click here

Full getting access to our Prompt Inventory check here

Don’t forget to visit our LinkedIn Page

Cutting-Edge AI Insights for Academia

UC San Francisco (UCSF) Department of Medicine:

Generative AI in the DOM: Cutting-Edge Applications and Innovations

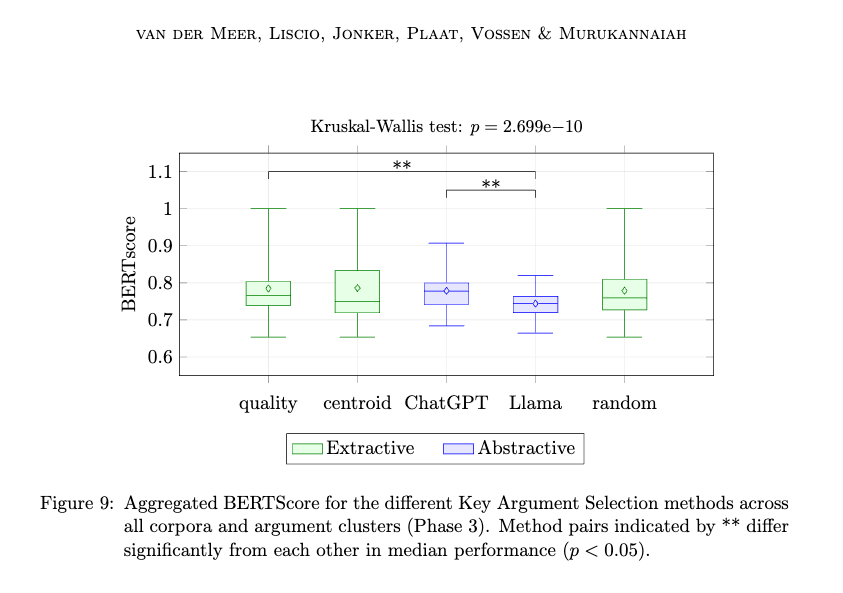

Paper of the week: A Hybrid Intelligence Method for Argument Mining by Michiel van der Meer, et al. (2024)

Spotlight on AI Tools for Academic Excellence

Sider - Chrome Extension: Assists in reading & writing on any webpage, Supports chats with links, images, PDFs, GPTs, etc. , Integrates GPT-4o & GPT-4o mini, Claude 3.5 & Gemini 1.5 Flash/Pro, Llama 3.1 70B/405B, Free to use

Deepgram: Deepgram's voice AI platform provides APIs for speech-to-text, text-to-speech, and language understanding. From medical transcription to autonomous agents, Deepgram is the go-to choice for developers of voice AI experiences.

Brandwatch’s AI: Iris is your AI companion, using a unique combination of proprietary AI and integrations with large language models to help you unlock the true power of generative AI. From research to content creation, Iris is here to help.

Opentext: Get the knowledge, insight, and confidence that can only come from being information driven with superior data management

Brand24: Get AI-powered access to mentions across social media, news, blogs, videos, forums, podcasts, reviews, and more.

For finding more featured and selected AI tools & apps, please subscribe to ScholarSphere Newsletter Series

Reply